当前位置:网站首页>Pytorch notes (5)

Pytorch notes (5)

2022-07-19 07:53:00 【The soul is on the way】

GoogleNet and ResidualBlock

GoogleNet

GoogLeNet yes 2014 year Christian Szegedy A new deep learning structure is proposed , Before that AlexNet、VGG And other structures are by increasing the depth of the network ( The layer number ) To get better training results , But the increase of the number of layers will bring many negative effects , such as overfit、 The gradient disappears 、 Gradient explosion, etc .inception From another angle to improve the training results : It can make more efficient use of computing resources , More features can be extracted under the same amount of calculation , So as to improve the training results .

GoogLeNet Call the following part inception, Whole GoogLeNet There are many different inception Made of blocks .inception There are two main contributions of structure : First, use. 1x1 The convolution of the two dimensions ; The other is convolution repaggregation on multiple dimensions at the same time .

- 1x1 Convolution

- effect 1: Add more convolutions to the receptive field of the same size , More abundant features can be extracted .

- effect 2: Use 1x1 Convolution for dimensionality reduction , It reduces the computational complexity .

- Convolution repolymerization on multiple sizes

- In the intuitive sense, convolution is carried out on multiple scales at the same time , It can extract features of different scales . Richer features also mean that the final classification judgment is more accurate .

- Using the principle of decomposing sparse matrix into dense matrix to accelerate the convergence speed .

- Hebbin Heb's principle . Because the ultimate goal of training convergence is to extract independent features , So gather the features with strong correlation in advance , Can accelerate convergence .

Code implementation

lass InceptionA(nn.Module):

def __init__(self, in_channels):

super(InceptionA, self).__init__()

self.branch1x1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch5x5_1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch5x5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)

self.branch3x3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch3x3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)

self.branch3x3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)

self.branch_pool = nn.Conv2d(in_channels, 24, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch5x5 = self.branch5x5_1(x)

branch5x5 = self.branch5x5_2(branch5x5)

branch3x3 = self.branch3x3_1(x)

branch3x3 = self.branch3x3_2(branch3x3)

branch3x3 = self.branch3x3_3(branch3x3)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch5x5, branch3x3, branch_pool]

return torch.cat(outputs, dim=1) # b,c,w,h c The corresponding is dim=1

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = nn.Conv2d(88, 20, kernel_size=5)

self.incep1 = InceptionA(in_channels=10)

self.incep2 = InceptionA(in_channels=20)

self.mp = nn.MaxPool2d(2)

# there 1408 You can comment out the following sentence and forward The last two sentences in , then print(x.size()) obtain , You can also fill in a number here and wait for an error , Then fill in

self.fc = nn.Linear(1408, 10) #1*28*28 → 10*24*24 → 10*12*12 → 88*12*12 → 20*8*8 → 88*4*4=1408

def forward(self, x):

in_size = x.size(0)

x = F.relu(self.mp(self.conv1(x)))

x = self.incep1(x)

x = F.relu(self.mp(self.conv2(x)))

x = self.incep2(x)

x = x.view(in_size, -1)

x = self.fc(x)

# print(x.size())

return x

model = Net()

Implement case

import torch

import torch.nn as nn

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

batch_size = 64

transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])

train_dataset = datasets.MNIST(root='./datasets/mnist/', train=True, download=True, transform=transform)

train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

test_dataset = datasets.MNIST(root='./datasets/mnist/', train=False, download=True, transform=transform)

test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

class InceptionA(nn.Module):

def __init__(self, in_channels):

super(InceptionA, self).__init__()

self.branch1x1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch5x5_1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch5x5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)

self.branch3x3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch3x3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)

self.branch3x3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)

self.branch_pool = nn.Conv2d(in_channels, 24, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch5x5 = self.branch5x5_1(x)

branch5x5 = self.branch5x5_2(branch5x5)

branch3x3 = self.branch3x3_1(x)

branch3x3 = self.branch3x3_2(branch3x3)

branch3x3 = self.branch3x3_3(branch3x3)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch5x5, branch3x3, branch_pool]

return torch.cat(outputs, dim=1) # b,c,w,h c The corresponding is dim=1

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = nn.Conv2d(88, 20, kernel_size=5)

self.incep1 = InceptionA(in_channels=10)

self.incep2 = InceptionA(in_channels=20)

self.mp = nn.MaxPool2d(2)

# there 1408 You can comment out the following sentence and forward The last two sentences in , then print(x.size()) obtain , You can also fill in a number here and wait for an error , Then fill in

self.fc = nn.Linear(1408, 10) #1*28*28 → 10*24*24 → 10*12*12 → 88*12*12 → 20*8*8 → 88*4*4=1408

def forward(self, x):

in_size = x.size(0)

x = F.relu(self.mp(self.conv1(x)))

x = self.incep1(x)

x = F.relu(self.mp(self.conv2(x)))

x = self.incep2(x)

x = x.view(in_size, -1)

x = self.fc(x)

# print(x.size())

return x

model = Net()

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad()

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300))

running_loss = 0.0

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

print('accuracy on test set: %d %% ' % (100*correct/total))

if __name__ == '__main__':

for epoch in range(2):

train(epoch)

test()

ResNet

Concept

ResNet Also known as residual neural network , It refers to adding residual learning to the traditional convolution neural network (residual learning) Thought , It solves the problem of gradient dispersion and precision decline in deep network ( Training set ) The problem of , Make the network deeper and deeper , The accuracy is guaranteed , And control the speed again .

starting point

With the deepening of the network , The problem of gradient dispersion will become more and more serious , It makes the network difficult or even impossible to converge . By including the initial standardization of the network , Data standardization and middle tier standardization (Batch Normalization) And other methods to solve the gradient dispersion problem . But the deepening of the network will bring another problem : The accuracy of the training set decreases , Here's the picture ,

Residual learning

The idea of residual learning is the above figure , It can be understood as a block, The definition is as follows :

y = F ( x , W i ) + x y = F(x,W_i)+x y=F(x,Wi)+x

Residual learning block There are two branches or two mappings (mapping):

identity mapping, It refers to the curve on the right of the figure above . seeing the name of a thing one thinks of its function ,identity mapping It refers to the mapping of itself , That is to say x x x Oneself ;

residual mapping, It refers to another branch , That is to say F ( x ) F(x) F(x) part , This part is called residual mapping , That is to say y − x y-x y−x .

What if the dimensions are different ?

The author puts forward that in identity mapping Some use 1x1 Convolution , Shown by the following : y = F ( x , W i ) + W s x y = F(x,W_i)+W_sx y=F(x,Wi)+Wsx among , W s W_s Ws refer to 1x1 Convolution operation .

From above , We can see clearly that Solid line and Dotted line Two connections , Solid line Connection part ( The first pink rectangle and the third pink rectangle ) It's all about execution 3x3x64 Convolution of , their channel The number is the same , Therefore, the calculation method is adopted : y = F ( x ) + x y = F(x)+x y=F(x)+x ,

Dashed Connection part ( The first green rectangle and the third green rectangle ) Namely 3x3x64 and 3x3x128 Convolution operation of , their channel The number is different. (64 and 128), among W It's convolution operation , Used to adjust x Of channel dimension , The purpose is to reduce the number of parameters ,

import torch

import torch.nn as nn

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

batch_size = 64

transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])

train_dataset = datasets.MNIST(root='./datasets/mnist/', train=True, download=True, transform=transform)

train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

test_dataset = datasets.MNIST(root='./datasets/mnist/', train=False, download=True, transform=transform)

test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

class ResidualBlock(torch.nn.Module):

def __init__(self, channels):

super(ResidualBlock, self).__init__()

self.channels = channels

self.cov1 = torch.nn.Conv2d(channels, channels,

kernel_size = 3, padding = 1)

self.cov2 = torch.nn.Conv2d(channels, channels,

kernel_size = 3, padding = 1))

def forward(self, x):

y = F.relu(self.cov1(x))

y = self.conv2(y)

return F.relue(x+y)

model = Model()

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 16, kernel_size=5)

self.conv2 = nn.Conv2d(16, 32, kernel_size=5)

self.mp = nn.MaxPool2d(2)

self.rblock1 = ResidualBlock(16)

self.rblock2 = ResidualBlock(16)

self.fc = nn.Linear(512, 10)

def forward(self, x):

in_size = x.size(0)

x = F.relu(self.mp(self.conv1(x)))

x = self.incep1(x)

x = F.relu(self.mp(self.conv2(x)))

x = self.incep2(x)

x = x.view(in_size, -1)

x = self.fc(x)

# print(x.size())

return x

model = Net()

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad()

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300))

running_loss = 0.0

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

print('accuracy on test set: %d %% ' % (100*correct/total))

if __name__ == '__main__':

for epoch in range(2):

train(epoch)

test()

Share the Bible and ResNet Of PDF Files for everyone to download .

https://www.aliyundrive.com/s/WpjJgDMqtfK

Click the link to save , Or copy this paragraph , open 「 Alicloud disk 」APP , No need to download speed online view , The original video is played at double speed .

Reference documents : Residual Learning For Image Recognition.

边栏推荐

- Transferring multiple pictures is the judgment of empty situation.

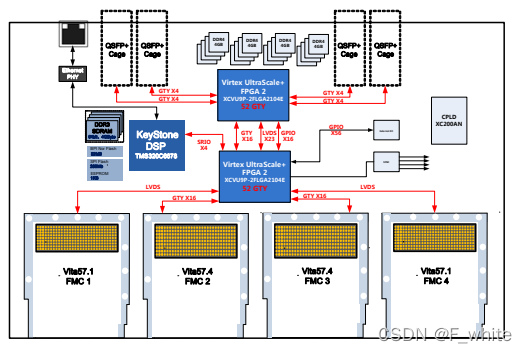

- FMC sub card: 8-channel 125msps sampling rate 16 bit AD acquisition sub card

- redis 存储结构原理 2

- Redis源码分析之双索引机制

- INSTALL_PARSE_FAILED_MANIFEST_MALFORMED

- 会话技术【黑马入门系列】

- Telnet installation

- MongoDB的使用

- Administrator blocked this app from running

- 理解LSTM和GRU

猜你喜欢

Download, installation and use of mongodb

This article introduces you to SOA interface testing

一文带你了解SOA接口测试

4-channel fmc+ baseband signal processing board (4-channel 2G instantaneous bandwidth ad+da)

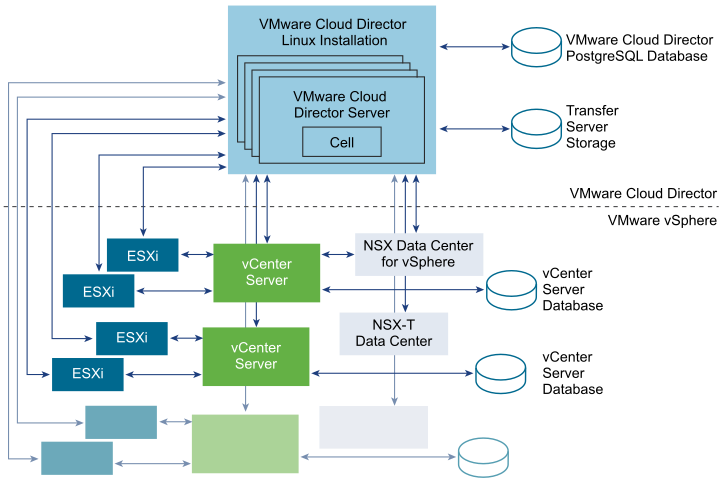

VMware cloud director 10.4 release (including download) - cloud computing provisioning and management platform

修改滚动条样式

xgboos-hperopt

Modify checkbox style

Redis源码分析之双索引机制

修改radio样式

随机推荐

Pytorch随记(3)

@ConditionalOnMissingBean 如何实现覆盖第三方组件中的 Bean

Administrator blocked this app from running

Object detection and bounding box

FMC sub card: 8-channel 125msps sampling rate 16 bit AD acquisition sub card

Promote trust in the digital world

Modify checkbox style

Jenkins 忘记密码怎么办?

V2X测试系列之认识V2X第二阶段应用场景

fiddler 抓包工具使用

PCIe bus architecture high performance data preprocessing board / K7 325t FMC interface data acquisition and transmission card

xgboos-hperopt

修改select样式

Use Altium designer software to draw a design based on stm32

Development board training: multi task program under stm32

MongoDB 索引

Basic lighting knowledge of shader introduction

Pycharm interface settings

MongoDB的使用

目标检测和边界框