KAIST VIO dataset

This is the dataset for testing the robustness of various VO/VIO methods

You can download the whole dataset on KAIST VIO dataset

Index

1. Trajectories

2. Downloads

3. Dataset format

4. Setup

1. Trajectories

- Four different trajectories: circle, infinity, square, and pure_rotation.

- Each trajectory has three types of sequence: normal speed, fast speed, and rotation.

- The pure rotation sequence has only normal speed, fast speed types

2. Downloads

You can download a single ROS bag file from the link below. (or whole dataset from KAIST VIO dataset)

| Trajectory | Type | ROS bag download |

|---|---|---|

| circle | normal fast rotation |

link link link |

| infinity | normal fast rotation |

link link link |

| square | normal fast rotation |

link link link |

| rotation | normal fast |

link link |

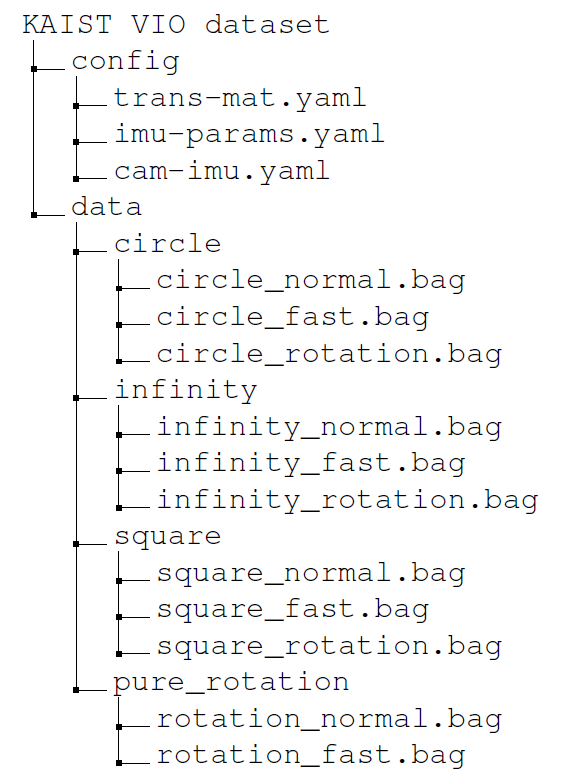

3. Dataset format

- Each set of data is recorded as a ROS bag file.

- Each data sequence contains the followings:

- stereo infra images (w/ emitter turned off)

- mono RGB image

- IMU data (3-axes accelerometer, 3-axes gyroscopes)

- 6-DOF Ground-Truth

- ROS topic

- Camera(30 Hz): "/camera/infra1(2)/image_rect_raw/compressed", "/camera/color/image_raw/compressed"

- IMU(100 Hz): "/mavros/imu/data"

- Ground-Truth(50 Hz): "/pose_transformed"

- In the config directory

- trans-mat.yaml: translational matrix between the origin of the Ground-Truth and the VI sensor unit.

(the offset has already been applied to the bag data, and this YAML file has estimated offset values, just for reference. To benchmark your VO/VIO method more accurately, you can use your alignment method with other tools, like origin alignment or Umeyama alignment from evo) - imu-params.yaml: estimated noise parameters of Pixhawk 4 mini

- cam-imu.yaml: Camera intrinsics, Camera-IMU extrinsics in kalibr format

- trans-mat.yaml: translational matrix between the origin of the Ground-Truth and the VI sensor unit.

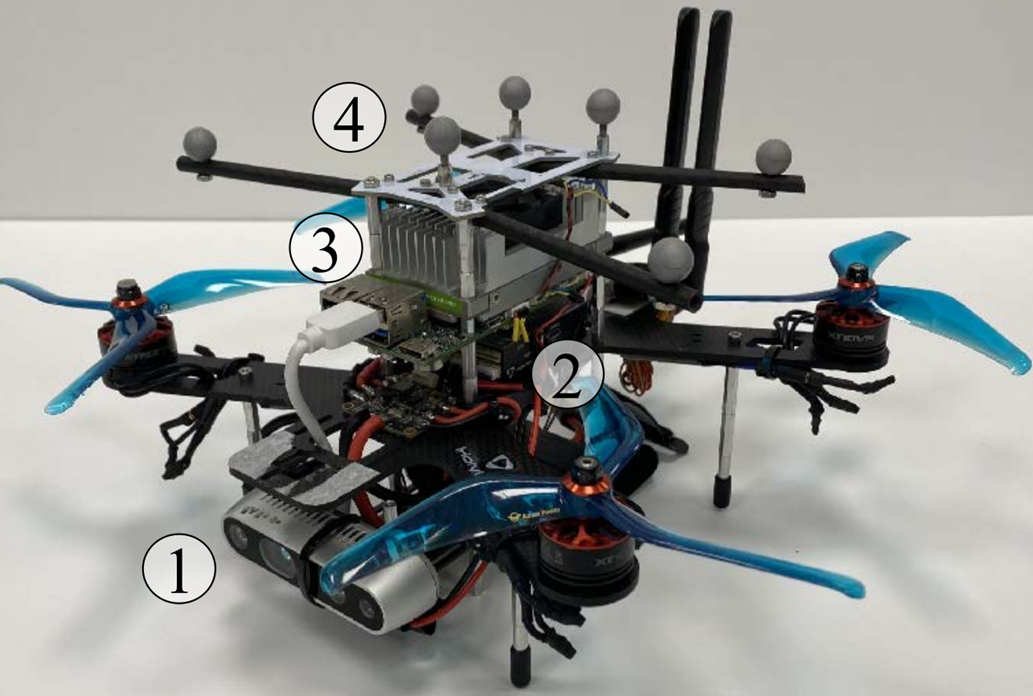

4. Setup

- Hardware

Fig.1 Lab Environment Fig.2 UAV platform

- VI sensor unit

- camera: Intel Realsense D435i (640x480 for infra 1,2 & RGB images)

- IMU: Pixhawk 4 mini

- VI sensor unit was calibrated by using kalibr

- Ground-Truth

- OptiTrack PrimeX 13 motion capture system with six cameras was used

- including 6-DOF motion information.

- Software (VO/VIO Algorithms): How to set each (publicly available) algorithm on the jetson board

| VO/VIO | Setup link |

|---|---|

| VINS-Mono | link |

| ROVIO | link |

| VINS-Fusion | link |

| Stereo-MSCKF | link |

| Kimera | link |

5. Citing

If you use the dataset in an academic context, please cite the following publication:

@article{jeon2021run,

title={Run Your Visual-Inertial Odometry on NVIDIA Jetson: Benchmark Tests on a Micro Aerial Vehicle},

author={Jeon, Jinwoo and Jung, Sungwook and Lee, Eungchang and Choi, Duckyu and Myung, Hyun},

journal={arXiv preprint arXiv:2103.01655},

year={2021}

}

6. Lisence

This datasets are released under the Creative Commons license (CC BY-NC-SA 3.0), which is free for non-commercial use (including research).