Li Mu's d2l (Part 4): Softmax Regression

2022-07-26 08:57:00 【madkeyboard】

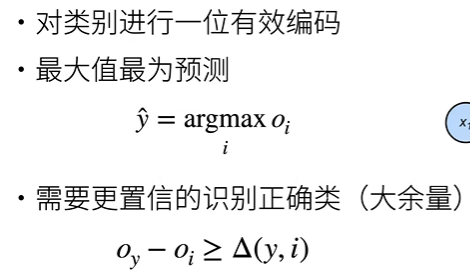

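I. Softmax Regression

Regression vs. classification

Regression estimates a continuous value.

Classification predicts a discrete category.

Squared loss

Uncalibrated scale (raw output scores)

Cross-entropy loss

Softmax regression is a multi-class classification model. The softmax operator produces a prediction confidence for each class, and cross-entropy measures the difference between the prediction and the label.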

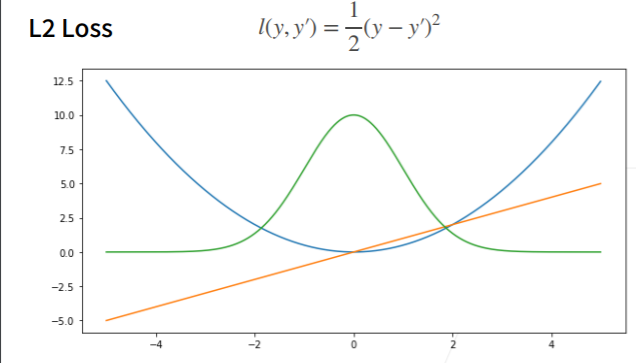
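As a rough illustration of the summary above, the following sketch computes softmax confidences and the cross-entropy loss for one example using only the Python standard library. The function names and the sample scores are hypothetical, chosen for illustration; subtracting the maximum score before exponentiating is a common stability trick and is not part of the original notes.

```python
import math

def softmax(logits):
    """Turn raw scores into non-negative confidences that sum to 1."""
    m = max(logits)  # subtracting the max does not change the result
    exps = [math.exp(o - m) for o in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, label):
    """Negative log of the confidence assigned to the true class."""
    return -math.log(probs[label])

logits = [1.0, 2.0, 3.0]       # hypothetical raw scores for 3 classes
probs = softmax(logits)
loss = cross_entropy(probs, 2)  # true class is index 2
print(probs, loss)
```

The loss shrinks toward 0 as the confidence for the true class approaches 1.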

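II. Loss Functions

Squared (L2) loss

The blue curve shows the loss as a function of the prediction y' when y = 0.

The green curve is the likelihood function, e^(-L).

The orange curve is the gradient of the loss.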

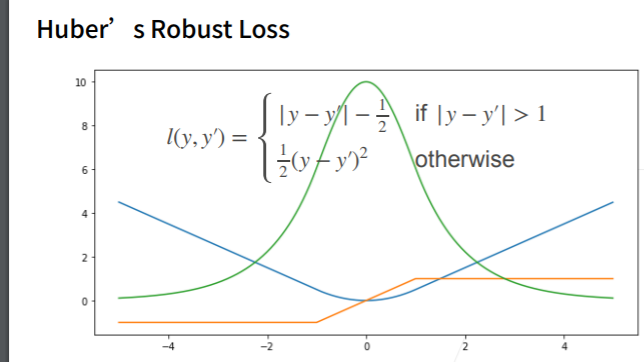

Absolute (L1) loss

Huber's Robust Loss

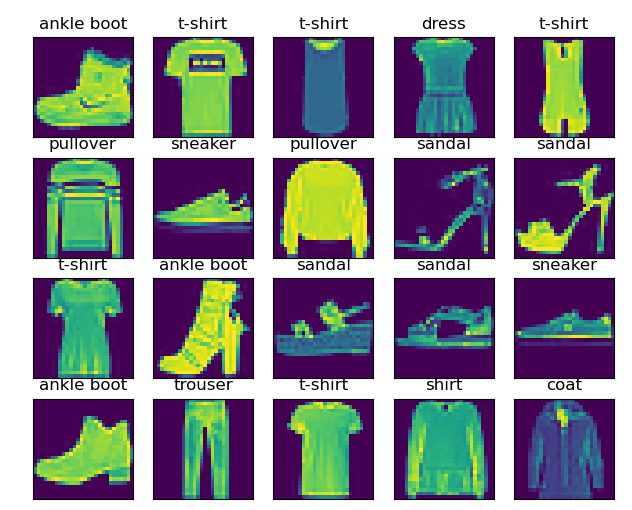
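The three losses can be compared numerically with a small sketch. The formulas below are the common textbook definitions (squared loss with a 1/2 factor, Huber loss with threshold `delta`); these forms and the function names are assumptions for illustration, since the notes above only name the losses.

```python
import math

def squared_loss(y, y_hat):
    """0.5 * (y - y_hat)^2: gradient grows with the error."""
    return 0.5 * (y - y_hat) ** 2

def absolute_loss(y, y_hat):
    """|y - y_hat|: constant-magnitude gradient, not smooth at 0."""
    return abs(y - y_hat)

def huber_loss(y, y_hat, delta=1.0):
    """Quadratic near 0, linear for errors larger than delta."""
    err = abs(y - y_hat)
    if err > delta:
        return delta * (err - 0.5 * delta)
    return 0.5 * err ** 2

def likelihood(loss_value):
    """The likelihood curve e^(-L) plotted in the figures."""
    return math.exp(-loss_value)

for y_hat in (0.0, 0.5, 3.0):
    print(y_hat, squared_loss(0, y_hat), absolute_loss(0, y_hat),
          huber_loss(0, y_hat), likelihood(squared_loss(0, y_hat)))
```

For small errors the Huber loss matches the squared loss, and for large errors it grows linearly like the absolute loss.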

III. Image Classification Dataset

import torch
import torchvision
from torch.utils import data
from torchvision import transforms
from d2l import torch as d2l

d2l.use_svg_display()

# 1 Download the data and load it into memory
trans = transforms.ToTensor()  # convert images to tensors
mnist_train = torchvision.datasets.FashionMNIST(
    root="FashionMNIST",
    train=True,  # training set
    transform=trans,  # return tensors rather than PIL images
    download=True,  # download the files if they are not already under root
)
mnist_test = torchvision.datasets.FashionMNIST(  # the test set is not used for training; it evaluates the model
    root="FashionMNIST",
    train=False,
    transform=trans,
    download=True,
)

# 2 Visualize the dataset
def get_fashion_mnist_labels(labels):
    """Return the text labels for Fashion-MNIST numeric labels."""
    text_labels = [
        't-shirt', 'trouser', 'pullover', 'dress', 'coat', 'sandal', 'shirt',
        'sneaker', 'bag', 'ankle boot'
    ]
    return [text_labels[int(i)] for i in labels]

def show_images(imgs, num_rows, num_cols, titles=None, scale=1.5):
    """Plot a grid of images."""
    figsize = (num_cols * scale, num_rows * scale)
    _, axes = d2l.plt.subplots(num_rows, num_cols, figsize=figsize)
    axes = axes.flatten()
    for i, (ax, img) in enumerate(zip(axes, imgs)):
        if torch.is_tensor(img):
            ax.imshow(img.numpy())  # image tensor
        else:
            ax.imshow(img)  # PIL image
        ax.axes.get_xaxis().set_visible(False)
        ax.axes.get_yaxis().set_visible(False)
        if titles:
            ax.set_title(titles[i])
    d2l.plt.show()
    return axes

X, y = next(iter(data.DataLoader(mnist_train, batch_size=20)))
show_images(X.reshape(20, 28, 28), 4, 5, titles=get_fashion_mnist_labels(y))

# 3 Read a minibatch of data
batch_size = 256

def get_dataloader_workers():
    return 4  # use 4 worker processes to read the data

train_iter = data.DataLoader(mnist_train, batch_size, shuffle=True,
                             num_workers=get_dataloader_workers())

timer = d2l.Timer()
for X, y in train_iter:
    continue
print(f'{timer.stop():.2f} sec')  # 2.88 sec
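To make the minibatch step concrete, here is a minimal standard-library sketch of what iterating over shuffled minibatches amounts to. It is not how `DataLoader` is implemented; the helper name `minibatches` and its `seed` parameter are hypothetical. It also shows why a batch size of 256 over the 60,000 Fashion-MNIST training images gives 235 batches per epoch when the last, smaller batch is kept.

```python
import math
import random

def minibatches(num_examples, batch_size, shuffle=True, seed=0):
    """Yield lists of example indices, one list per minibatch."""
    indices = list(range(num_examples))
    if shuffle:
        random.Random(seed).shuffle(indices)  # new random order of examples
    for start in range(0, num_examples, batch_size):
        yield indices[start:start + batch_size]

batches = list(minibatches(10, 4))
batch_sizes = [len(b) for b in batches]    # the last batch is smaller
num_train_batches = math.ceil(60000 / 256)  # batches per epoch at batch_size=256
print(batch_sizes, num_train_batches)
```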

Wrap these steps in a function so they can be reused later.

def load_data_fashion_mnist(batch_size, resize=None):  # resize allows a custom image size
    """Download the Fashion-MNIST dataset and load it into memory."""
    trans = [transforms.ToTensor()]
    if resize:
        trans.insert(0, transforms.Resize(resize))
    trans = transforms.Compose(trans)
    mnist_train = torchvision.datasets.FashionMNIST(root="../data",
                                                    train=True,
                                                    transform=trans,
                                                    download=True)
    mnist_test = torchvision.datasets.FashionMNIST(root="../data",
                                                   train=False,
                                                   transform=trans,
                                                   download=True)
    return (data.DataLoader(mnist_train, batch_size, shuffle=True,
                            num_workers=get_dataloader_workers()),
            data.DataLoader(mnist_test, batch_size, shuffle=False,
                            num_workers=get_dataloader_workers()))

train_iter, test_iter = load_data_fashion_mnist(32, resize=64)
for X, y in train_iter:
    print(X.shape, X.dtype, y.shape, y.dtype)
    break

IV. Implementing Softmax Regression from Scratch

import torch
from IPython import display
from d2l import torch as d2l

# 1 Set the batch size (number of images per minibatch) and get the training and test iterators
batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

# 2 Flatten each image into a vector of length 784; the dataset has 10 classes, so the output dimension is 10
num_inputs = 784  # 28 * 28
num_outputs = 10

w = torch.normal(0, 0.01, size=(num_inputs, num_outputs), requires_grad=True)  # weights
b = torch.zeros(num_outputs, requires_grad=True)  # bias

# 3 Define softmax
def softmax(X):
    X_exp = torch.exp(X)
    partition = X_exp.sum(1, keepdim=True)  # row-wise normalization constant
    return X_exp / partition  # uses broadcasting

# Check
# X = torch.normal(0, 1, (2, 5))
# x_prob = softmax(X)
# print(x_prob, x_prob.sum(1))
''' Each row sums to 1:
tensor([[0.2055, 0.1315, 0.0336, 0.0758, 0.5536],
        [0.1539, 0.0319, 0.0362, 0.6295, 0.1485]]) tensor([1., 1.])
'''

# 4 Implement the model
def net(X):
    return softmax(torch.matmul(X.reshape((-1, w.shape[0])), w) + b)

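# 5 Implement the cross-entropy loss
# Example
# y = torch.tensor([0, 2])  # create a tensor of true class indices
# y_hat = torch.tensor([[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]])  # predicted probabilities
# print(y_hat[[0, 1], y])  # pick the predicted probability of the true class in each row
''' tensor([0.1000, 0.5000]) '''

def cross_entropy(y_hat, y):
    return -torch.log(y_hat[range(len(y_hat)), y])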

# 6 Compare the predicted classes with the true labels y
def accuracy(y_hat, y):
    """Return the number of correct predictions."""
    if len(y_hat.shape) > 1 and y_hat.shape[1] > 1:
        y_hat = y_hat.argmax(axis=1)
    cmp = y_hat.type(y.dtype) == y
    return float(cmp.type(y.dtype).sum())
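The indexing in `cross_entropy` and the argmax comparison in `accuracy` can be traced by hand on the two-example case from step 5. The sketch below redoes both with plain Python lists instead of tensors, purely as an illustration; the variable names are hypothetical.

```python
import math

y = [0, 2]                                   # true class indices
y_hat = [[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]]   # predicted probabilities

# Cross-entropy: pick the probability of the true class in each row, then -log
picked = [row[label] for row, label in zip(y_hat, y)]
losses = [-math.log(p) for p in picked]

# Accuracy: compare the index of the largest probability with the true label
predicted = [max(range(len(row)), key=row.__getitem__) for row in y_hat]
num_correct = sum(int(p == t) for p, t in zip(predicted, y))
acc = num_correct / len(y)
print(picked, losses, predicted, acc)
```

The first example is misclassified (class 2 is predicted instead of class 0), so its loss is much larger than that of the second example.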

# 7 Evaluate the accuracy of any model net
class Accumulator:
    """Accumulate sums over `n` variables."""
    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        self.data = [a + float(b) for a, b in zip(self.data, args)]

    def reset(self):
        self.data = [0.0] * len(self.data)

    def __getitem__(self, idx):
        return self.data[idx]

def evaluate_accuracy(net, data_iter):
    if isinstance(net, torch.nn.Module):
        net.eval()  # set the model to evaluation mode
    metric = Accumulator(2)  # number of correct predictions, total number of predictions
    with torch.no_grad():  # gradients are not needed for evaluation
        for X, y in data_iter:
            metric.add(accuracy(net(X), y), y.numel())
    return metric[0] / metric[1]

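# 8 Train softmax regression for one epoch
def train_epoch_ch3(net, train_iter, loss, updater):
    if isinstance(net, torch.nn.Module):
        net.train()  # set the model to training mode
    metric = Accumulator(3)  # sum of training loss, number of correct predictions, number of examples
    for X, y in train_iter:
        y_hat = net(X)
        l = loss(y_hat, y)
        if isinstance(updater, torch.optim.Optimizer):
            # Built-in optimizer: this branch expects `loss` to return a scalar (mean) loss
            updater.zero_grad()
            l.backward()
            updater.step()
            metric.add(float(l) * len(y), accuracy(y_hat, y), y.numel())
        else:
            # Custom updater: `loss` returns one value per example
            l.sum().backward()
            updater(X.shape[0])
            metric.add(float(l.sum()), accuracy(y_hat, y), y.numel())
    return metric[0] / metric[2], metric[1] / metric[2]  # average loss, training accuracy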

# 9 Plotting class
class Animator:
    """Plot data as an animation."""
    def __init__(self, xlabel=None, ylabel=None, legend=None, xlim=None,
                 ylim=None, xscale='linear', yscale='linear',
                 fmts=('-', 'm--', 'g-.', 'r:'), nrows=1, ncols=1,
                 figsize=(3.5, 2.5)):
        if legend is None:
            legend = []
        d2l.use_svg_display()
        self.fig, self.axes = d2l.plt.subplots(nrows, ncols, figsize=figsize)
        if nrows * ncols == 1:
            self.axes = [self.axes, ]
        self.config_axes = lambda: d2l.set_axes(
            self.axes[0], xlabel, ylabel, xlim, ylim, xscale, yscale, legend)
        self.X, self.Y, self.fmts = None, None, fmts

    def add(self, x, y):
        if not hasattr(y, "__len__"):
            y = [y]
        n = len(y)
        if not hasattr(x, "__len__"):
            x = [x] * n
        if not self.X:
            self.X = [[] for _ in range(n)]
        if not self.Y:
            self.Y = [[] for _ in range(n)]
        for i, (a, b) in enumerate(zip(x, y)):
            if a is not None and b is not None:
                self.X[i].append(a)
                self.Y[i].append(b)
        self.axes[0].cla()
        for x, y, fmt in zip(self.X, self.Y, self.fmts):
            self.axes[0].plot(x, y, fmt)
        self.config_axes()
        display.display(self.fig)
        display.clear_output(wait=True)

# 10 Training function
def train_ch3(net, train_iter, test_iter, loss, num_epochs, updater):
    animator = Animator(xlabel='epoch', xlim=[1, num_epochs], ylim=[0.3, 0.9],
                        legend=['train loss', 'train acc', 'test acc'])
    for epoch in range(num_epochs):
        train_metrics = train_epoch_ch3(net, train_iter, loss, updater)
        test_acc = evaluate_accuracy(net, test_iter)
        animator.add(epoch + 1, train_metrics + (test_acc,))
    train_loss, train_acc = train_metrics
    # Sanity checks on the final epoch
    assert train_loss < 0.5, train_loss
    assert train_acc <= 1 and train_acc > 0.7, train_acc
    assert test_acc <= 1 and test_acc > 0.7, test_acc

# 11 Define minibatch stochastic gradient descent to optimize the model's loss
lr = 0.1

def updater(batch_size):
    return d2l.sgd([w, b], lr, batch_size)

# 12 Start training
num_epochs = 10
train_ch3(net, train_iter, test_iter, cross_entropy, num_epochs, updater)
d2l.plt.show()
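The training loop backpropagates the summed loss of a minibatch and then calls `updater(X.shape[0])`, so each step divides the gradient by the batch size before scaling it by the learning rate. The sketch below mirrors only that arithmetic on plain floats; `sgd_step` is a hypothetical helper, whereas `d2l.sgd` updates tensors in place and resets their gradients.

```python
def sgd_step(params, grads, lr, batch_size):
    """Return parameters after one minibatch SGD step: p - lr * g / batch_size."""
    return [p - lr * g / batch_size for p, g in zip(params, grads)]

params = [1.0, -2.0]
grads = [4.0, 8.0]  # hypothetical gradients of the summed loss over a batch of 4
new_params = sgd_step(params, grads, lr=0.1, batch_size=4)
print(new_params)
```

Dividing by the batch size makes the step size independent of how many examples are in the minibatch.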

Making predictions

def predict_ch3(net, test_iter, n=6):
    """Predict labels (defined in Chapter 3)."""
    for X, y in test_iter:  # take only the first batch
        break
    trues = d2l.get_fashion_mnist_labels(y)
    preds = d2l.get_fashion_mnist_labels(net(X).argmax(axis=1))
    titles = [true + '\n' + pred for true, pred in zip(trues, preds)]  # true label above, prediction below
    d2l.show_images(X[0:n].reshape((n, 28, 28)), 1, n, titles=titles[0:n])

predict_ch3(net, test_iter)
d2l.plt.show()

V. Concise Implementation of Softmax Regression

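import torch
from torch import nn
from d2l import torch as d2l
import os

os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"  # tolerate a duplicate OpenMP runtime on some setups

# 1 Set the batch size (number of images per minibatch) and get the training and test iterators
batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

# 2 PyTorch does not implicitly reshape inputs, so a flatten layer is placed before the linear layer
net = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))

def init_weights(m):
    if isinstance(m, nn.Linear):
        nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights)

# 3 Pass unnormalized predictions (logits) to the cross-entropy loss, which computes softmax and its log together
loss = nn.CrossEntropyLoss(reduction='none')

# 4 Use minibatch stochastic gradient descent with a learning rate of 0.1 as the optimizer
trainer = torch.optim.SGD(net.parameters(), lr=0.1)

# 5 Call the training function defined earlier to train the model
num_epochs = 10
d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)
d2l.plt.show()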
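Step 3 passes unnormalized scores to the loss because computing softmax and its logarithm together is numerically safer than exponentiating large scores directly. The standard-library sketch below illustrates the idea with the log-sum-exp trick; the function names are hypothetical and this is not PyTorch's implementation.

```python
import math

def log_softmax(logits):
    """Compute log(softmax(logits)) without exponentiating large values."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(o - m) for o in logits))
    return [o - log_sum_exp for o in logits]

def cross_entropy_from_logits(logits, label):
    """Cross-entropy computed directly from unnormalized scores."""
    return -log_softmax(logits)[label]

# A naive softmax would need exp(1000), which overflows a Python float
try:
    math.exp(1000.0)
    naive_overflows = False
except OverflowError:
    naive_overflows = True

stable_loss = cross_entropy_from_logits([1000.0, 0.0], 0)
small_loss = cross_entropy_from_logits([1.0, 2.0, 3.0], 2)
print(naive_overflows, stable_loss, small_loss)
```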
