当前位置:网站首页>SQL优化

SQL优化

2022-07-17 08:31:00 【毕竟尹稳健】

SQL优化

插入数据:

- 批量插入

insert into tb_test values(1,'tom'),(2,'cat'),(3,'jerry');

- 手动提交事务

start transaction;

insert into tb_test values(1,'tom'),(2,'cat'),(3,'jerry');

commit;

- 主键顺序插入

建议顺序插入,性能比乱序插入好

- 大批量插入数据

如果一次性需要插入大批量数据,使用insert语句插入性能较低,此时可以使用MySQL数据库提供的load指令进行插入。操作如下:

# 客户端连接服务端时,加上参数 --local-infile

mysql --local-infile -u root -p

# 设置全局参数local_infile 为1,开启从本地加载文件导入数据的开关

set global local_infile = 1;

# 执行load指令将准备好的数据,加载到表结构中

load data local infile '/root/sql1.log' into table `tb_user` fields terminated by ',' lines terminated by '\n';

博客连接:https://www.cnblogs.com/yuejucai/archive/2018/08/23/9526015.html

主键优化:

- 数据组织方式

在InnoDB存储引擎中,表数据都是根据主键顺序组织存放的,这种存储方式的表称为索引组织表(index organized table IOT)。

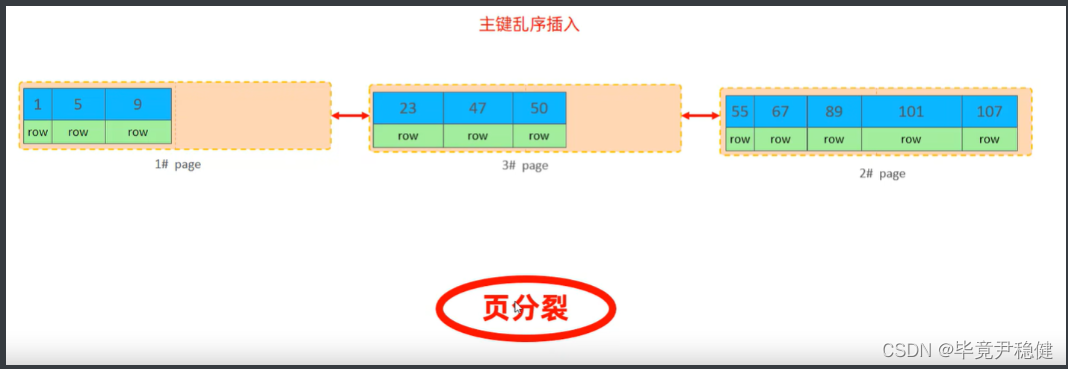

- 页分裂

页可以为空,也可以填充一半,也可以填充100%。每个页包含了2-N行数据(如果一行数据多大,会行溢出),根据主键排列。

主键顺序插入:

主键乱序插入:

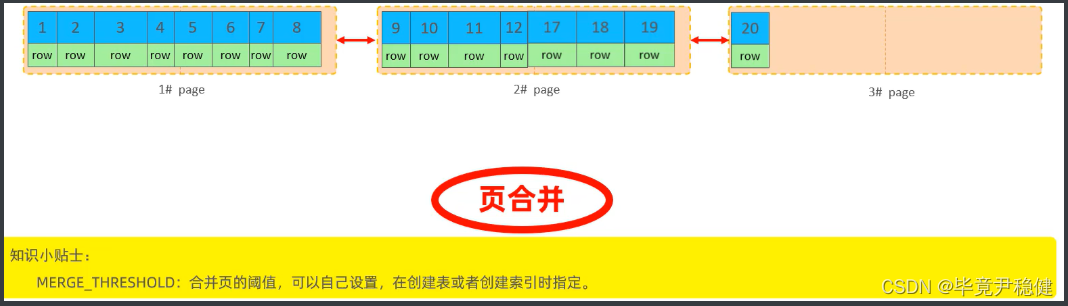

- 页合并:

当删除一行记录时,实际上记录并没有被物理删除,只是记录被标记(flaged)为删除并且它的空间变得允许被其他记录声明使用。

当页中删除的记录达到MERGE_THRESHOLD(默认为页的50%),InnoDB会开始寻找最靠近的页(前或后)看看是否可以将两个页合并以优化空间使用。

- 主键设计原则

- 满足业务需求的情况下,尽量降低主键的长度

- 插入数据时,尽量选择顺序插入,选择使用AUTO_INCREMENT自增主键

- 尽量不要使用UUID作主键或这是其他自然主键,如身份证号

- 业务操作时,避免对主键的修改

这里为什么尽量不要主键太长嫩?

因为InnoDB存储引擎有个辅助索引,他的叶子节点存储的是表的主键,如果太长会占用磁盘空间

order by 优化:

1、Using filesort:通过表的索引或全表扫描,读取满足条件的数据行,然后在排序缓冲区sort buffer中完成排序操作,所有不是通过索引直接返回排序结果的排序都叫FileSort 排序。

2、Using index:通过有序索引顺序扫描直接返回有序数据,这种情况即为using index,不需要额外排序,操作效率高。

mysql> explain select id ,name ,score from iqiyi order by score;

+----+-------------+-------+------------+------+---------------+------+---------+------+------+----------+----------------+

| id | select_type | table | partitions | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+-------+------------+------+---------------+------+---------+------+------+----------+----------------+

| 1 | SIMPLE | iqiyi | NULL | ALL | NULL | NULL | NULL | NULL | 2273 | 100.00 | Using filesort |

+----+-------------+-------+------------+------+---------------+------+---------+------+------+----------+----------------+

1 row in set, 1 warning (0.00 sec)

创建索引后:

mysql> create index idx_iqiyi_score on iqiyi(score);

# 这里还可以指定索引是升序还是降序

mysql> create index idx_iqiyi_score_desc on iqiyi(score desc);

Query OK, 0 rows affected (0.04 sec)

Records: 0 Duplicates: 0 Warnings: 0

mysql> explain select id ,name ,score from iqiyi order by score;

+----+-------------+-------+------------+------+---------------+------+---------+------+------+----------+----------------+

| id | select_type | table | partitions | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+-------+------------+------+---------------+------+---------+------+------+----------+----------------+

| 1 | SIMPLE | iqiyi | NULL | ALL | NULL | NULL | NULL | NULL | 2273 | 100.00 | Using filesort |

+----+-------------+-------+------------+------+---------------+------+---------+------+------+----------+----------------+

1 row in set, 1 warning (0.00 sec)

mysql> explain select id ,score from iqiyi order by score desc;

+----+-------------+-------+------------+-------+---------------+----------------------+---------+------+------+----------+-------------+

| id | select_type | table | partitions | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+-------+------------+-------+---------------+----------------------+---------+------+------+----------+-------------+

| 1 | SIMPLE | iqiyi | NULL | index | NULL | idx_iqiyi_score_desc | 6 | NULL | 2273 | 100.00 | Using index |

+----+-------------+-------+------------+-------+---------------+----------------------+---------+------+------+----------+-------------+

1 row in set, 1 warning (0.00 sec)

mysql> explain select id ,score from iqiyi order by score asc;

+----+-------------+-------+------------+-------+---------------+-----------------+---------+------+------+----------+-------------+

| id | select_type | table | partitions | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+-------+------------+-------+---------------+-----------------+---------+------+------+----------+-------------+

| 1 | SIMPLE | iqiyi | NULL | index | NULL | idx_iqiyi_score | 6 | NULL | 2273 | 100.00 | Using index |

+----+-------------+-------+------------+-------+---------------+-----------------+---------+------+------+----------+-------------+

1 row in set, 1 warning (0.00 sec)

创建索引优化语法:

# 根据age,phone进行降序一个升序,一个降序

explain select id,age,phone from tb_user order by age asc,phone desc;

# 创建索引

create index idx_user_age_phone_ad on tb_user(age asc,phone desc);

# 根据age,phone进行降序一个升序,一个降序

explain select id,age,phone from tb_user order by age asc,phone desc;

小结:

- 根据排序字段建立合适的索引,多字段排序时,也遵循最左前缀法则

- 尽量使用覆盖索引

- 多字段排序,一个升序一个降序,此时需要注意联合索引在创建时的规则(asc/desc)

- 如果不可避免的出现filesort,大数据量排序时,可以适当的增大排序缓冲区大小sort_buffer_size(默认是256k)

group by 优化:

在分组操作时,可以通过索引来提高效率

在分组操作时,索引的使用也是满足最左前缀法则的

# 没使用到索引时

mysql> explain select job_education, count(*) from chinahr group by job_education;

+----+-------------+---------+------------+------+---------------+------+---------+------+------+----------+-----------------+

| id | select_type | table | partitions | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+---------+------------+------+---------------+------+---------+------+------+----------+-----------------+

| 1 | SIMPLE | chinahr | NULL | ALL | NULL | NULL | NULL | NULL | 9735 | 100.00 | Using temporary |

+----+-------------+---------+------------+------+---------------+------+---------+------+------+----------+-----------------+

1 row in set, 1 warning (0.00 sec)

创建索引:

mysql> create index idx_chinahr_job_education on chinahr(job_education);

Query OK, 0 rows affected (0.10 sec)

Records: 0 Duplicates: 0 Warnings: 0

mysql> explain select job_education, count(*) from chinahr group by job_education;

+----+-------------+---------+------------+-------+---------------------------+---------------------------+---------+------+------+----------+-------------+

| id | select_type | table | partitions | type | possible_keys | key | key_len | ref | rows | filtered | Extra |

+----+-------------+---------+------------+-------+---------------------------+---------------------------+---------+------+------+----------+-------------+

| 1 | SIMPLE | chinahr | NULL | index | idx_chinahr_job_education | idx_chinahr_job_education | 1023 | NULL | 9735 | 100.00 | Using index |

+----+-------------+---------+------------+-------+---------------------------+---------------------------+---------+------+------+----------+-------------+

1 row in set, 1 warning (0.00 sec)

limit 优化:

一个常见又非常头疼的问题就是limit 2000000,10,此时需要MySQL排序前2000010记录,仅仅返回2000000 - 2000010的记录,其他记录丢弃,查询排序的代价非常大。

优化思路:一般分页查询时,通过创建覆盖索引能够比较好地提高性能,可以通过覆盖索引加子查询形式进行优化。

mysql> select * from job limit 100000,10;

10 rows in set (0.14 sec)

mysql> select job_id from job limit 100000,10;

10 rows in set (0.11 sec)

mysql> select * from job_id where job_id in (select job_id from job limit 100000,10);

ERROR 1235 (42000): This version of MySQL doesn't yet support 'LIMIT & IN/ALL/ANY/SOME subquery;

mysql> select * from job j,(select job_id from job limit 100000,10) ji where j.job_id = ji.job_id;

10 rows in set (0.09 sec)

count 优化:

- MyISAM引擎把一个表的总行数存在了磁盘中,因此执行count(*)的时候会直接返回这个数,效率很高,但是加了where条件就会很慢

- InnoDB引擎就麻烦了,他执行count(*)的时候,需要把数据一行一行的从引擎里面读出来,然后累计技术。

优化思路:自己计数

count()是一个聚合函数,对于返回的结果集,一行行的判断,如果count函数的参数不是NULL,累计值就加1,否则不加,最后返回累计值。

用法:count(*) 、count(主键)、count(字段)、count(1)

- count(主键)

InnoDB引擎会遍历整张表,把每一行的主键id值都取出来,返回给服务层。服务层拿到主键后,直接按行进行累加(主键不可能为nul)。

- count(字段)

没有not null约束:InnoDB引擎会遍历整张表把每一行的字段值都取出来,返回给服务层,服务层判断是否为null,不为null,计数累加。有not null约束: InnoDB引擎会遍历整张表把每一行的字段值都取出来,返回给服务层,直接按行进行累加。

- count(1)

InnoDB引擎遍历整张表,但不取值。服务层对于返回的每一行,放一个数字“1”进去,直接按行进行累加。

- count(*)

InnoDB引擎并不会把全部字段取出来,而是专门做了优化,不取值,服务层直接按行进行累加。

按效率排序的话,count(字段)<count(主键id)<count(1)≈count(*),

所以尽量使用count(*)

update优化:

InnoDB的行锁是针对索引加的锁,不是针对记录加的锁,并且该索引不能失效,否则会从行锁升级为表锁。

边栏推荐

- New application of arm computing, illegal fishing "catch all"

- Introduction to flutter flutter calculates the last 1 month, 3 months, half a year, 12 months

- Redis6 new data type - hyperloglog

- 6-9漏洞利用-Telnet登陆提权

- Build an embedded development environment

- 用于语义分割的Hausdorff损失函数

- MySQL中查询一个字符串字段的值不为空到底该怎么写?

- ROS common toolkit commands

- JS learning notes 06-08: traversal of arrays and four methods of arrays

- Opportunities and challenges of Brazilian mobile game Investment Agency

猜你喜欢

6-9漏洞利用-Telnet登陆提权

matlab导入小数点后9位以上的浮点数

分布式事务-可靠消息最终一致性解决方案

深度学习第四周Key Concepts on Deep Neural Networks习题整理

【手写数字识别】基于Lenet网络实现手写数字识别附matlab代码

Detailed explanation of ansible automatic operation and maintenance (IV) preparation, use, command execution and example demonstration of playbook in ansible

xgen 毛发guide历史被清理解决方法

Redis introduction

Graphite thickness measurement

Redis6 new data type geospatial

随机推荐

5g at that time, where will driverless driving go in the future?

mongodb $符号的神奇用法+mongo数据类型

JSON under mysql8

JS learning notes 06-08: traversal of arrays and four methods of arrays

深度学习之线性回归+基础优化

JS learning notes 01-03 - Reference of this, global scope, method

RestTemplate

What is memory overflow

JS学习笔记04-05——构造函数的修改以及使用工厂方法创建

Graphite thickness measurement

Event loop, macro task, micro task

Convex mirror 3D glass contour scanning

事件循环、宏任务、微任务

Redis publishing and subscription

深度学习第三周Shallow Neural Networks习题整理

力扣382链表随机节点笔记

Example description of alternative writing of instanceof

ROS常用工具包命令

一文,教你实现单点登录

Gateway new generation gateway